Many of the projects I have posted on the web involve lasers, so I am increasingly told that, at first glance, it looks as if I don't do programming or interactive content production at all. In fact, I do a lot of development!

However, because of the nature of these projects, much of that work is hard to disclose.

This project was an installation experienced only in a non-public venue, so unfortunately I can't write about it in concrete terms. Still, it involved some technical challenges, so I'll write those down.

Production: a VR experience for many people

A multiplayer VR installation combining sound and video.

Touching the projection surface plays a realistic sound.

I was in charge of implementing this content.

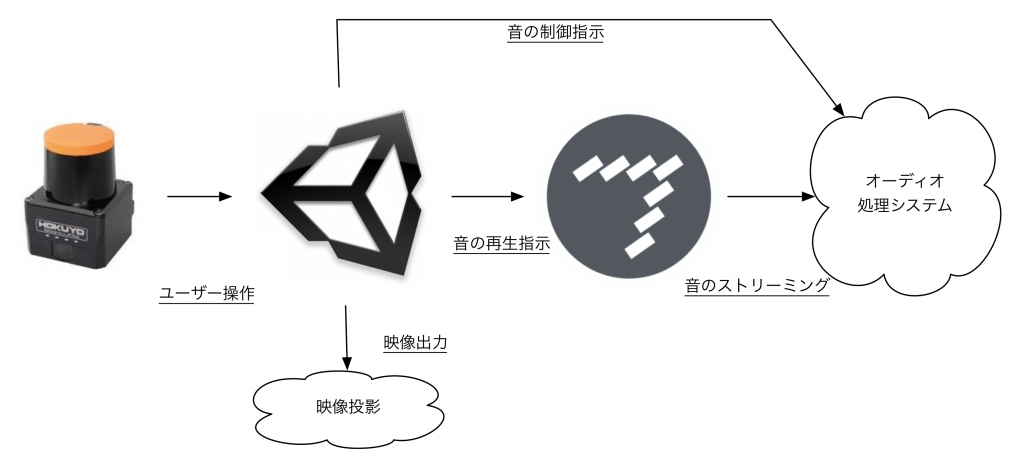

Here is how it works.

Sound control: why I adopted Max

In response to each touch, Unity tells the Max app which channel to use and which sound file to play, and Max plays the sound.
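
The post doesn't say how Unity sends these instructions to Max. The sketch below assumes the message travels over UDP as OSC to a [udpreceive] object in the patch. The `/play` address, port 7400, and the function names are hypothetical. The real sender would be C# inside Unity; Python is used here only to show the message layout.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_play(channel: int, filename: str, address: str = "/play") -> bytes:
    """Build an OSC message '<address> <int32 channel> <string filename>'.

    The '/play' address is a hypothetical name, not taken from the original project.
    """
    msg = _osc_pad(address.encode("ascii"))
    msg += _osc_pad(b",is")              # type tags: one int32, then one string
    msg += struct.pack(">i", channel)    # OSC integers are big-endian int32
    msg += _osc_pad(filename.encode("utf-8"))
    return msg


def send_play(channel: int, filename: str, host: str = "127.0.0.1", port: int = 7400) -> None:
    """Send one play instruction to a Max patch listening with [udpreceive <port>]."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_play(channel, filename), (host, port))
```

On the Max side, chaining something like [udpreceive 7400] into [route /play] would strip the address and leave the channel number and file name as a two-element list.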

The setup supports up to 192 output channels. We don't actually use all 192, but I had only ever handled 8 channels at most and couldn't picture how it would behave, so I first verified how it could be implemented.

Since the interactive part was built in Unity, I also considered keeping everything inside Unity with a library such as NAudio (https://github.com/naudio/NAudio). However, multi-channel output looked difficult to implement, and playing even 8 sounds at the same time often caused dropouts, so I looked for another approach.

So I decided to use Max (https://cycling74.com/).

Max makes it easy to stress-test multi-channel playback. I didn't go all the way to 192 ch, but about 150 ch played simultaneously without dropouts, so I adopted it.

It was reassuring that I could run these tests easily even with no prior Max experience.
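
A test like the ~150 ch check above can be scripted as a ramp. Start from the familiar 8 ch, then raise the number of simultaneous voices toward the 192 ch limit and listen for dropouts at each step. The plan generator below is a hypothetical sketch. The test file name and the step size are placeholders, not values from the project.

```python
MAX_CHANNELS = 192  # upper limit of the output setup described in the post


def ramp_steps(start=8, limit=MAX_CHANNELS, step=16):
    """Channel counts to try, from `start` up to and including `limit`."""
    if not 1 <= start <= limit:
        raise ValueError("start must be between 1 and limit")
    counts = list(range(start, limit + 1, step))
    if counts[-1] != limit:
        counts.append(limit)  # always finish exactly at the limit
    return counts


def play_commands(n_channels, test_file="test_tone.wav"):
    """One (channel, file) pair per channel, numbered from 1 as Max's dac~ does."""
    if not 1 <= n_channels <= MAX_CHANNELS:
        raise ValueError(f"n_channels must be 1..{MAX_CHANNELS}")
    return [(ch, test_file) for ch in range(1, n_channels + 1)]


# Usage: for each step, fire every command at once and listen for dropouts.
# for n in ramp_steps():
#     for channel, filename in play_commands(n):
#         ...  # send to Max by whatever transport the patch uses
```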

In Max, sending a sound to a different channel only requires changing a number, and switching files was also relatively easy.

Because I wasn't used to programming by connecting patches, it was hard going for a while. I worked around the problems that came from not knowing Max's conventions by combining the patch with external objects (plugin-like objects written in C++) and JavaScript.

Note: With more time to investigate, NAudio might have worked, but the delivery date was approaching, so I gave up on it. For controlling sound from C#, it is still a very useful library, especially when outputting to something other than the standard audio output, such as an audio interface.

About touch detection

Because many people experience the content at once, touch input uses a laser sensor.

I had done this before, so it didn't give me much trouble.
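
The post doesn't name the sensor model. The sketch below assumes a 2D scanning laser range finder mounted at one corner of the wall, scanning in a plane just in front of the surface. Each reading is then an (angle, distance) pair that must be converted into wall coordinates before any touch logic runs. The function and parameter names are hypothetical.

```python
import math


def scan_to_points(distances_mm, start_deg, step_deg, wall_w_mm, wall_h_mm):
    """Convert one scan of range readings into (x, y) points on the wall.

    Assumes the sensor sits at the wall's (0, 0) corner and that angles are
    measured from the wall's x axis toward its y axis. Reading i was taken at
    angle start_deg + i * step_deg. Readings <= 0 are treated as "no return".
    Points outside the wall rectangle (floor, frame, people walking past) are dropped.
    """
    points = []
    for i, d in enumerate(distances_mm):
        if d <= 0:
            continue  # no reflection for this beam
        angle = math.radians(start_deg + i * step_deg)
        x = d * math.cos(angle)
        y = d * math.sin(angle)
        if 0 <= x <= wall_w_mm and 0 <= y <= wall_h_mm:
            points.append((x, y))
    return points
```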

My impression is that laser sensors are better suited to coarse interactions, such as simply detecting "is this area being touched?", than to fine interactions like tap, double tap, or drag.
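
A coarse "is this area being touched?" check fits that impression. Each touch point is tested against rectangular zones, each tied to a channel and a sound file, and finger-level detail is ignored. This is a minimal sketch; the `Zone` type, zone layout, channels, and file names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """A rectangular hotspot on the projection surface (hypothetical layout)."""
    x: float
    y: float
    w: float
    h: float
    channel: int
    sound: str

    def contains(self, px: float, py: float) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h


def touched_zones(zones, points):
    """Return the zones hit by at least one touch point, in zone order.

    Only "touched or not" matters; how many fingers landed in a zone is ignored.
    """
    return [z for z in zones if any(z.contains(px, py) for px, py in points)]
```

Each returned zone would then trigger one play instruction with its `channel` and `sound`.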

Since touches had to be detected against an image projected on a large wall, I made it possible to debug at life size.

Depending on how a hand was placed, individual fingers were sometimes recognized as separate touches. To prevent erroneous operation, touch coordinates within a certain range of each other were grouped and processed as a single touch.
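
The post doesn't specify the grouping rule. One simple choice, shown here as an assumption, is single-linkage grouping. Points within `radius` of each other, directly or through a chain of neighbours, are merged, and each group is reported as one touch at its centroid. The function name is hypothetical.

```python
import math


def group_touches(points, radius):
    """Merge nearby touch points into single touches; return group centroids.

    Two points share a group if they are within `radius` of each other,
    directly or through a chain of such neighbours (union-find).
    """
    parent = list(range(len(points)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if math.dist(points[i], points[j]) <= radius:
                parent[find(i)] = find(j)

    groups = {}
    for i, p in enumerate(points):
        groups.setdefault(find(i), []).append(p)

    return [
        (sum(x for x, _ in g) / len(g), sum(y for _, y in g) / len(g))
        for g in groups.values()
    ]
```

Chaining lets a spread hand collapse into one touch. It can also merge two people standing very close together, so `radius` would need tuning to roughly a hand's width in sensor units.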

Finally

I couldn't write much about the content itself, but this project was my first time using Max.

It wasn't a flashy use, such as applying effects to the sound, but the system is very stable and the content itself seems to be popular.

I'd like to do more work with Max from now on.

Since I struggled with Max as a newcomer, I'd like to write up production notes on this blog.